Regression is a powerful tool. Fortunately, regressions can be calculated easily in SPSS. This page is a brief lesson on how to calculate a regression in SPSS. As always, if you have any questions, please email me at MHoward@SouthAlabama.edu!

The most common type of regression is linear regression, which identifies a linear relationship between one or more predictors and an outcome. In other words, a regression can tell you how strongly one or many predictors relate to a single outcome. Regression also tests each of these relationships while controlling for the other predictors, and it can be used to answer questions such as the following:

- What is the relationship of job satisfaction and leader ability with employee job performance?

- What is the relationship between hours studied and test grades?

- What is the relationship between NBA player height, weight, wingspan and the number of points scored per game?

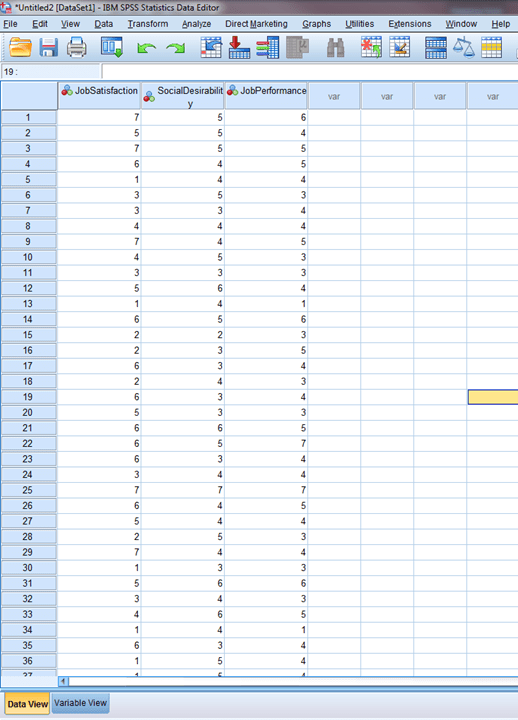

Of course, there is more nuance to regression, but we will keep it simple. To answer these questions, we can use SPSS to calculate a regression equation. If you don’t have a dataset, you can download the example dataset here. In the dataset, we are investigating the relationships of job satisfaction and social desirability with job performance.

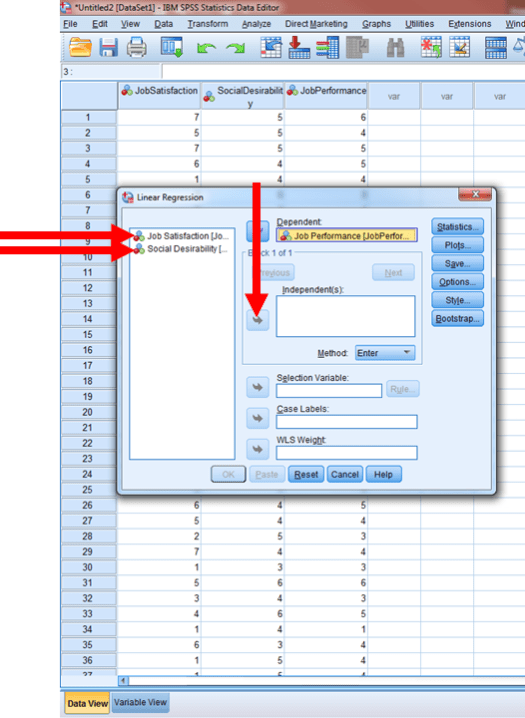

The data should look something like this:

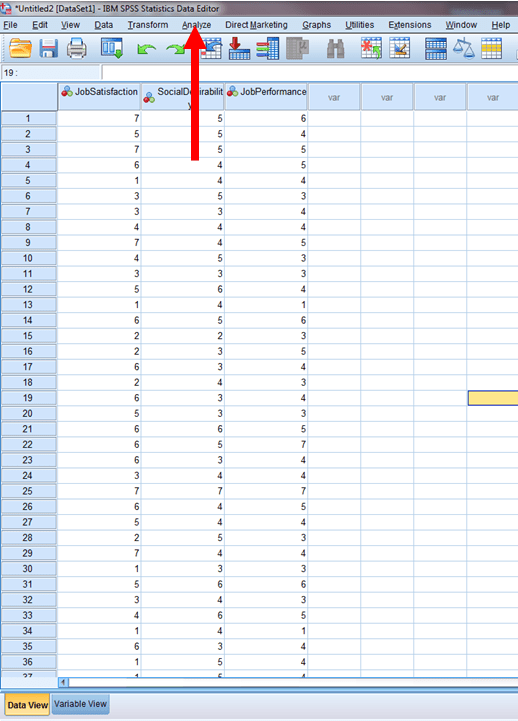

First, click on Analyze.

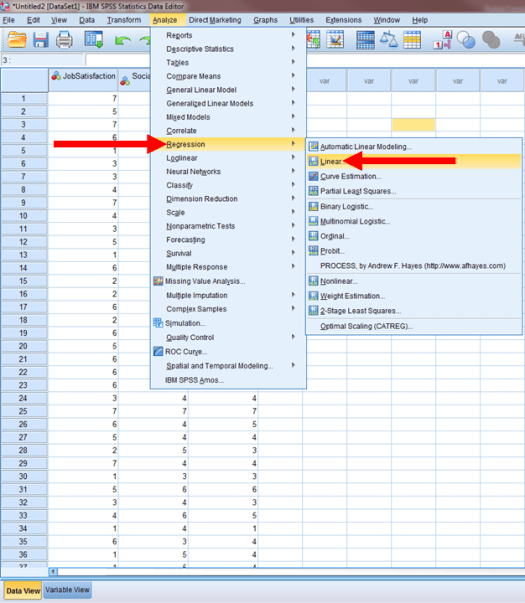

Then, click on Regression and then Linear…

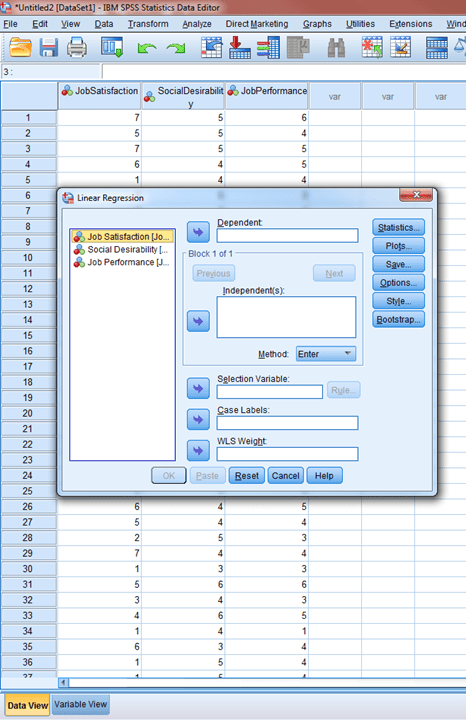

You should now have a window like the one below.

From this window, click on your outcome variable and then the arrow next to Dependent. This identifies which variable is your outcome variable.

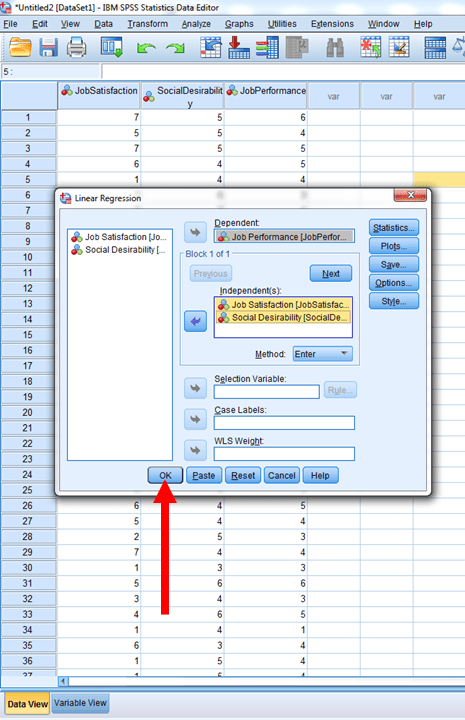

Then, click on your predictor variables and add them to the window labeled Independent(s). This identifies your predictor variables.

To finish, press OK.

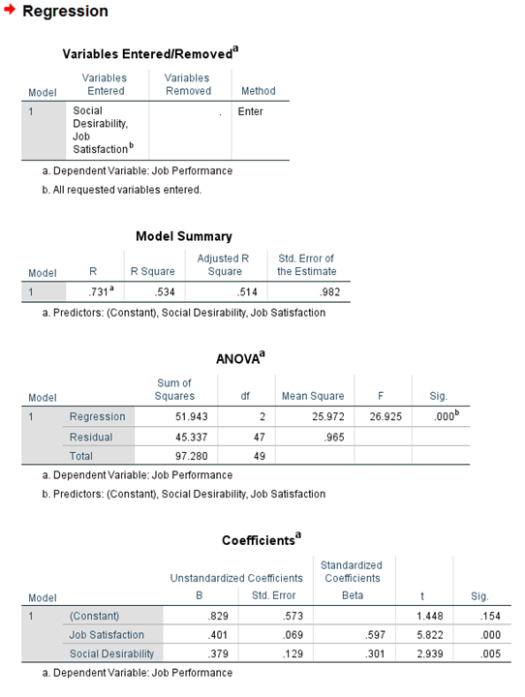

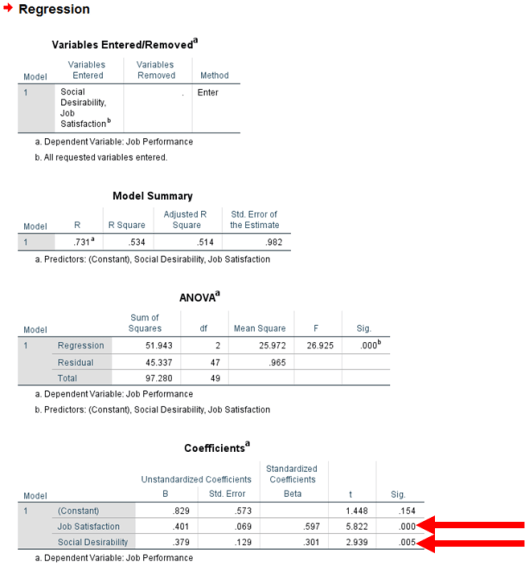
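If you are curious about what happens after you press OK, the sketch below shows the general idea behind a linear regression: ordinary least squares, solved here through the normal equations. This is only an illustration in plain Python, not SPSS's actual implementation. The function name `fit_ols` and the small made-up dataset are assumptions for this example and are not part of the tutorial's dataset.

```python
def fit_ols(predictors, outcome):
    """Fit outcome = b0 + b1*x1 + ... + bk*xk by ordinary least squares.

    predictors: list of rows, one list of predictor values per case.
    outcome: list of outcome values, one per case.
    Returns the coefficients [b0, b1, ..., bk], intercept first.
    """
    # Design matrix: the leading 1 in each row estimates the intercept.
    X = [[1.0] + [float(v) for v in row] for row in predictors]
    k = len(X[0])

    # Normal equations (X'X) b = X'y, stored as an augmented matrix.
    A = [
        [sum(r[i] * r[j] for r in X) for j in range(k)]
        + [sum(r[i] * y for r, y in zip(X, outcome))]
        for i in range(k)
    ]

    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear; no unique solution")
        A[col], A[pivot] = A[pivot], A[col]
        A[col] = [v / A[col][col] for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]

    return [A[i][k] for i in range(k)]


# Made-up, noise-free cases generated from y = 1 + 2*x1 + 3*x2
# (hypothetical values, NOT the tutorial's dataset).
rows = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 5]]
y = [1 + 2 * x1 + 3 * x2 for x1, x2 in rows]

intercept, b1, b2 = fit_ols(rows, y)
print(round(intercept, 6), round(b1, 6), round(b2, 6))
```

Because the made-up outcome has no noise, the fit should recover an intercept close to 1 and slopes close to 2 and 3. Real data will not fit this cleanly, which is why SPSS also reports significance tests and R-Square.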

We should get results that look like the output below.

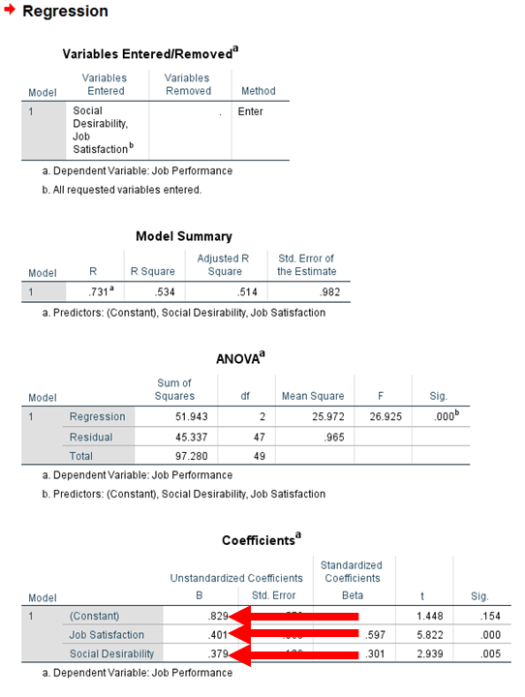

When reading the coefficients table, we can look at the B column under Unstandardized Coefficients to find the unstandardized coefficients. The unstandardized coefficient of the intercept (labeled Constant in SPSS) is .829, the unstandardized coefficient of job satisfaction is .401, and the unstandardized coefficient of social desirability is .379.

Also, we can use this table to identify the significance of our predictors by looking at the Sig. column. Job satisfaction was significant at the p < .001 level, and social desirability was significant at the p < .01 level.

Lastly, we can see that the R-Square of the model is .53. This means that the predictors together account for about 53% of the variance in the outcome.
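To make R-Square a little more concrete, here is a minimal sketch of how it can be computed from observed and predicted outcome values: one minus the ratio of the residual sum of squares to the total sum of squares. The function name `r_squared` and the four example values are hypothetical and are not taken from the example dataset.

```python
def r_squared(observed, predicted):
    """Proportion of outcome variance accounted for by the predictions."""
    mean_y = sum(observed) / len(observed)
    # Variation left unexplained by the model.
    ss_residual = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    # Total variation of the outcome around its mean.
    ss_total = sum((y - mean_y) ** 2 for y in observed)
    return 1 - ss_residual / ss_total


# Hypothetical values for illustration only.
observed = [1, 2, 3, 4]
predicted = [1.5, 1.5, 3.5, 3.5]
print(round(r_squared(observed, predicted), 2))  # 0.8
```

An R-Square of 1 would mean the predictions match the observed values exactly, while an R-Square of 0 would mean the model does no better than always predicting the mean.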

Together, the regression equation for these results is y = .829 + .401(JS) + .379(SD), where JS is job satisfaction and SD is social desirability; both predictors were statistically significant (p < .001 and p < .01, respectively); and the R-Square for the model was .53. Of course, the output provides other information that may be useful for your particular purposes, but this guide covers only the basics.
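One way to use the regression equation is to plug in scores and get a predicted outcome. The short sketch below does this with the coefficients reported above. The function name and the example scores of 4 and 3 are assumptions for illustration only, since this guide does not state the scales of the variables.

```python
# Unstandardized coefficients reported in the SPSS output above.
INTERCEPT = 0.829
B_JOB_SATISFACTION = 0.401
B_SOCIAL_DESIRABILITY = 0.379


def predict_job_performance(job_satisfaction, social_desirability):
    """Apply y = .829 + .401(JS) + .379(SD) to one person's scores."""
    return (
        INTERCEPT
        + B_JOB_SATISFACTION * job_satisfaction
        + B_SOCIAL_DESIRABILITY * social_desirability
    )


# Hypothetical scores, chosen only to show the arithmetic:
# .829 + .401 * 4 + .379 * 3 = .829 + 1.604 + 1.137 = 3.570
print(round(predict_job_performance(4, 3), 3))  # 3.57
```

Note that each coefficient is the expected change in job performance for a one-unit increase in that predictor while the other predictor is held constant.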

Now you should be able to perform a regression in SPSS. As always, if you have any questions or comments, please email me at MHoward@SouthAlabama.edu!